Onboarding Redesign for AI application

Reinventing Onboarding for Presentations.ai

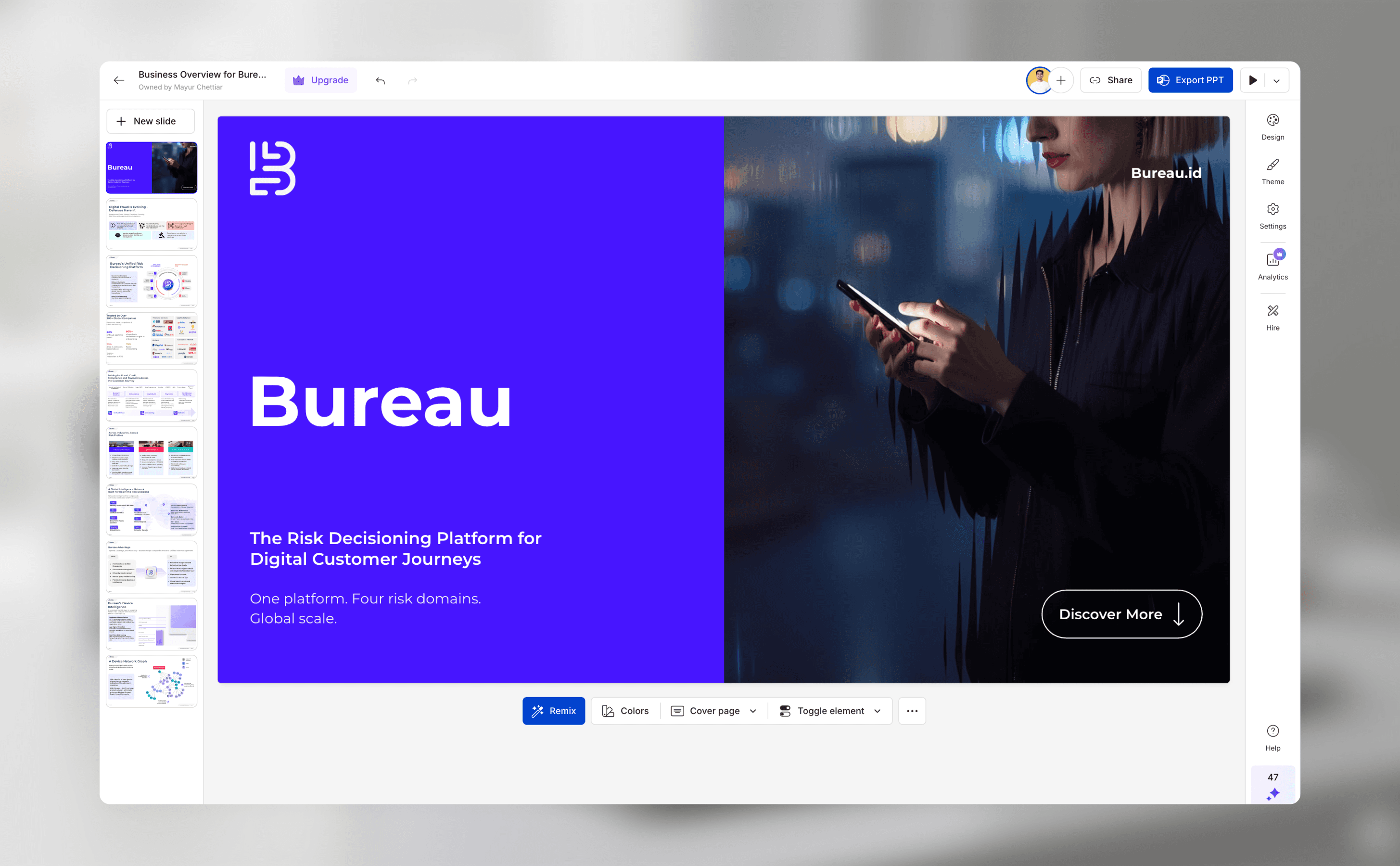

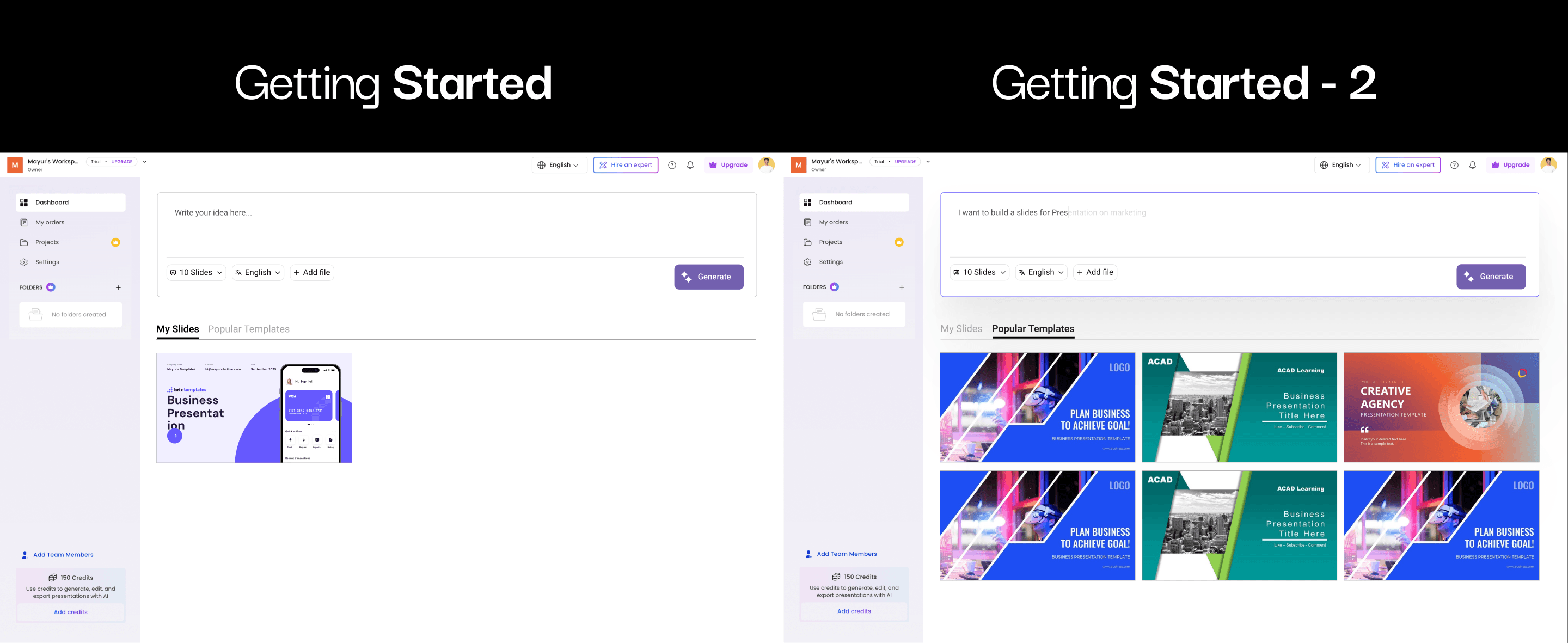

The team at Presentations.ai, an AI presentation maker, wanted to enhance their onboarding experience to better serve their growing base of non-designer professionals. They asked me to help identify how the flow could more effectively capture the right content context without slowing users down. Through research and testing, I discovered that the existing role-based questions weren’t providing enough meaningful input for the AI to generate high-quality first presentations.

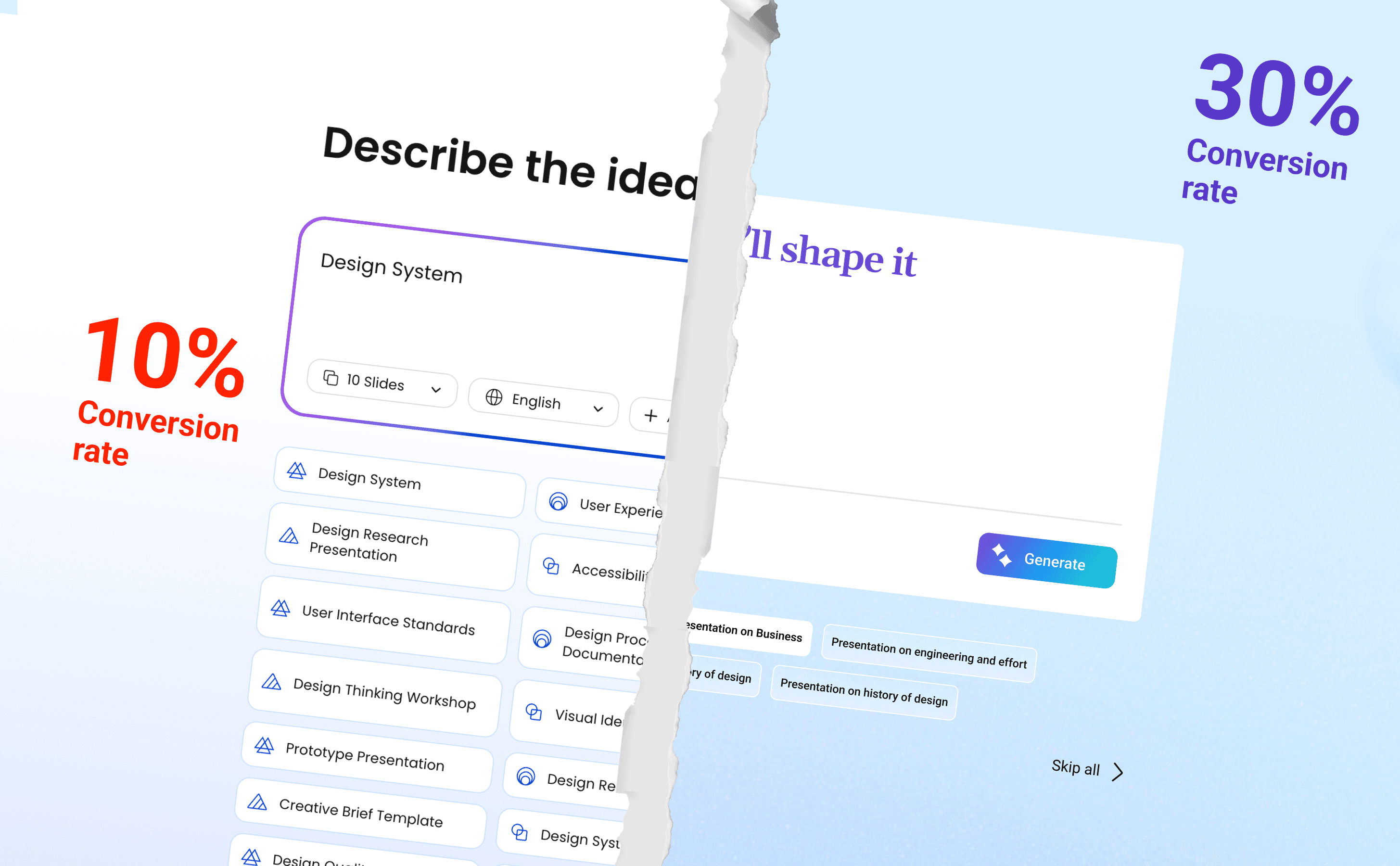

My initial solution focused heavily on speed and design control (Concepts 1, 2, and 3). While fast, it failed to address the LLM's need for content validation. After critical feedback, I adapted my strategy, shifting focus from a designer-centric "speed and look" approach to a content-centric "validation and intent" approach.

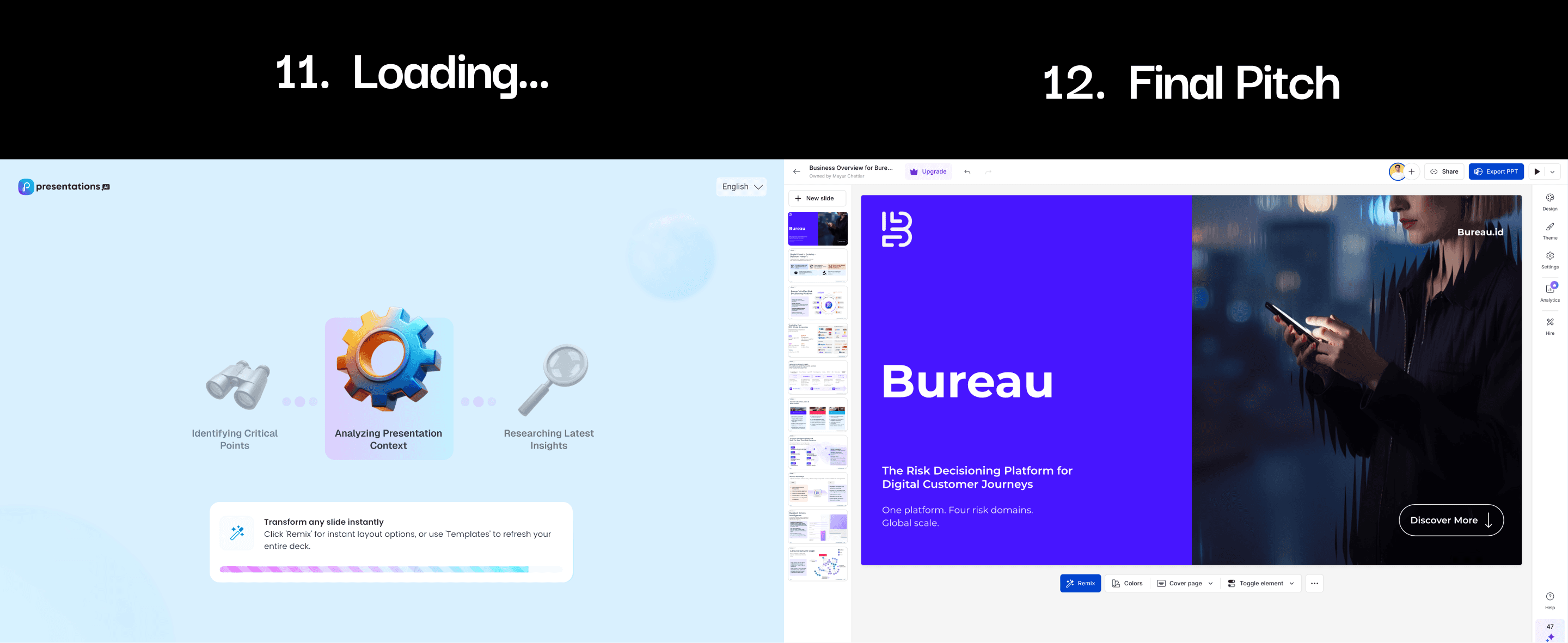

The final redesign strategically replaced generic questions with purposeful data-gathering steps like "Confirm Facts & Figures," which is anticipated to increase the onboarding completion rate by 20-30% and drastically improve the quality of the first AI-generated presentation.

Step 1: Defining the Project

The project focused on improving the initial user experience (onboarding) for Presentations.ai, an AI-powered tool that generates presentation slides. Since the core technology relies on large language models, it needed high-quality user input to deliver strong results. In my role as UX/Product Designer, UX Writer, and UX Researcher, I identified key issues in the existing onboarding flow and redesigned it to better support the target user, i.e., a busy professional with limited design experience, while aligning with the business goal of increasing user retention.

Step 2: Articulating the Challenge

The original flow was a barrier to entry due to three critical qualitative failures:

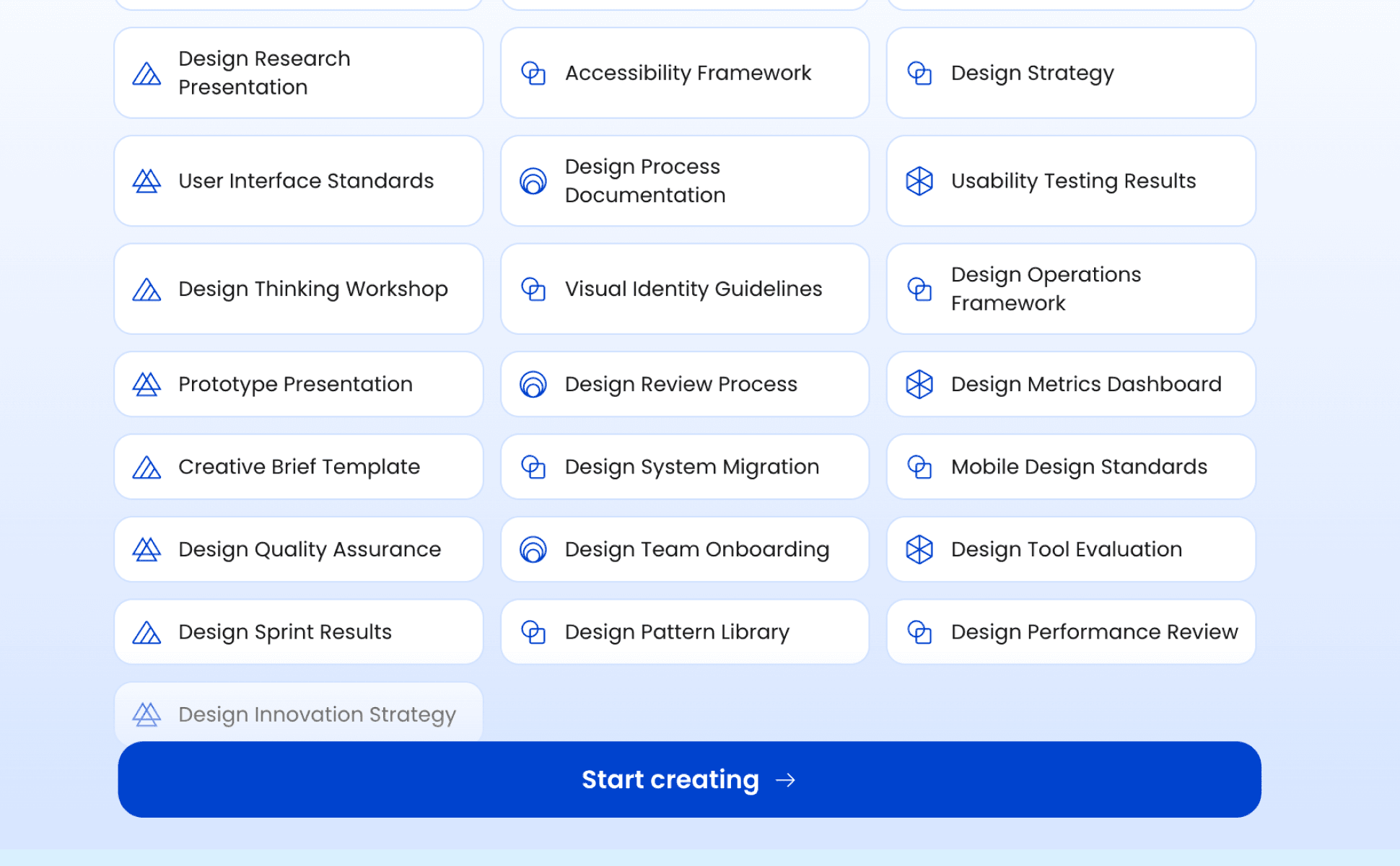

Unjustified Friction and Decision Paralysis: The flow contained numerous mandatory steps and options (e.g., login choices, detailed role/position questions). Per Hick's Law, each added choice lengthens decision time, so these steps imposed a high cognitive load without delivering proportional value to the user or the AI.

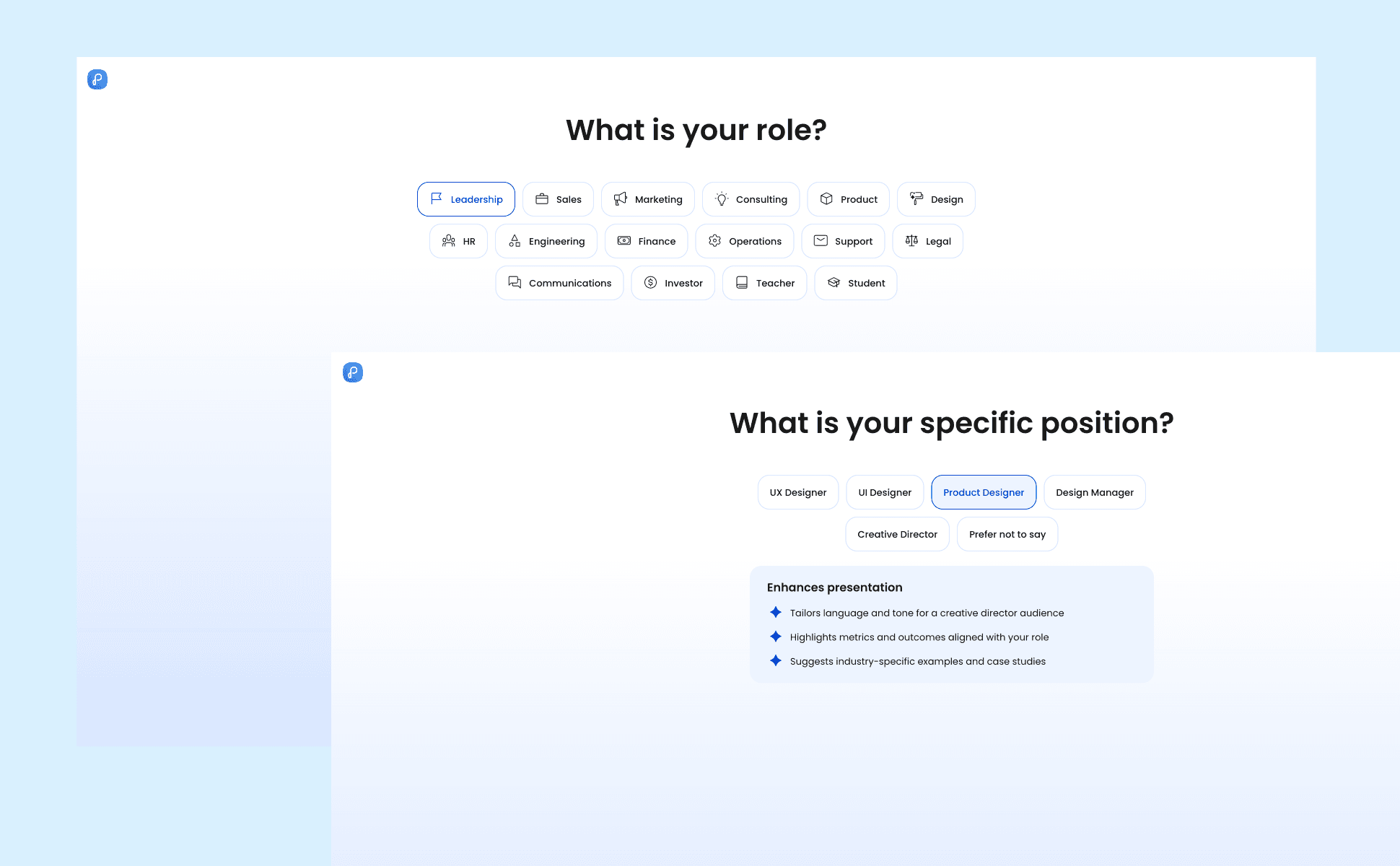

Low-Value Data Collection and Mismatched Expectations: The mandatory questions about "Role" and "Position" yielded low-fidelity data that did not significantly improve the relevance of the AI's content. Condensing these questions into a single screen could have produced similar results.

Post-Hoc Value Barrier (Hope-Based Design): Users were forced through a tedious process, often only to receive a generic, low-quality output. In addition, placing the close button where it was hard to spot came across as a desperate attempt to make people fill out the form even if they were not interested.

Business Impact

The result was high abandonment in the funnel and wasted AI resources (tokens) generating unsatisfactory presentations. This demonstrated a critical need to apply Problem-Solving Skills to bridge the gap between user effort and AI output quality.

Step 3: Setting Measurable Goals

User Objectives

Reduce the perceived effort and unjustified friction needed to generate a first presentation.

Increase user confidence that their input is sufficient to receive a high-quality, relevant presentation (content validation).

Business Objectives

Increase the Onboarding Completion Rate (Goal: ↑ 20-30%).

Improve the quality of the first generated output, thereby boosting user activation and retention.

Gather high-value user intent data (Motivation, Creation Method) to enhance segmentation and feature prioritization.

Metrics of Success

The primary metric is the Onboarding Completion Rate. The secondary metric is the adoption rate of the "Content-rich" input methods (Document/Website upload) compared to simple text prompts.
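As a rough illustration, the two metrics could be computed as in the sketch below. It assumes the completion rate is the ratio of projects created to sign-ups (the definition the stakeholder gives later in this case study) and that the 20-30% goal is a relative uplift, which the brief does not state explicitly. The `OnboardingCounts` fields and function names are hypothetical and are not taken from the product's analytics.

```python
from dataclasses import dataclass


@dataclass
class OnboardingCounts:
    """Hypothetical aggregate counts for one reporting period."""
    signups: int               # users who signed up
    projects_created: int      # users who reached the activation event
    content_rich_starts: int   # first presentations started from a document or website
    text_prompt_starts: int    # first presentations started from a plain text prompt


def completion_rate(counts: OnboardingCounts) -> float:
    """Primary metric: share of sign-ups that went on to create a project."""
    if counts.signups == 0:
        return 0.0
    return counts.projects_created / counts.signups


def content_rich_adoption(counts: OnboardingCounts) -> float:
    """Secondary metric: share of first presentations started from rich content."""
    total = counts.content_rich_starts + counts.text_prompt_starts
    if total == 0:
        return 0.0
    return counts.content_rich_starts / total


def relative_uplift(before: float, after: float) -> float:
    """Relative change between two rates (assumed reading of the 20-30% goal)."""
    if before == 0:
        raise ValueError("baseline rate must be non-zero")
    return (after - before) / before
```

Comparing `completion_rate` for the old and new flows with `relative_uplift` would show whether the redesign lands inside the target range.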

Step 4: Detailing Discovery

Research Methods and Insights

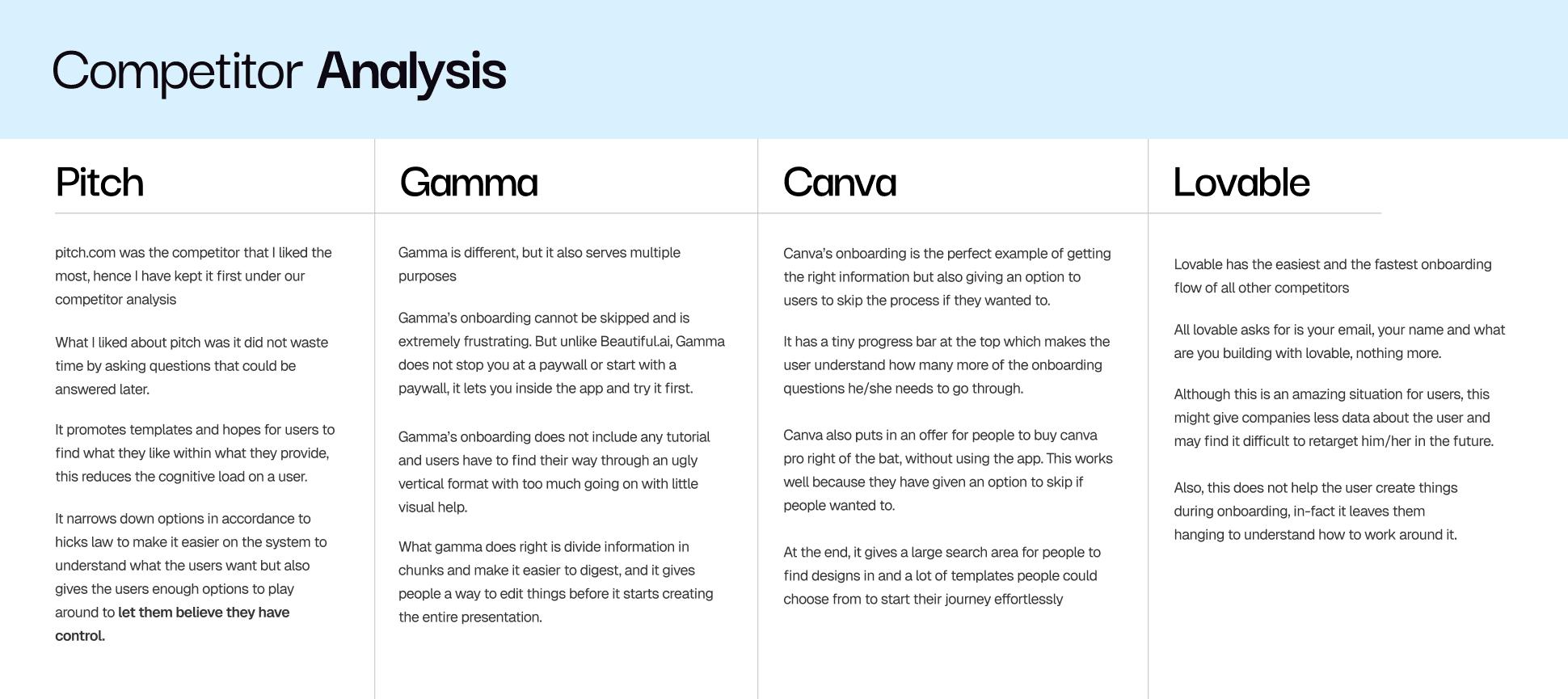

Research included Competitive Analysis (comparing Pitch, Canva, and Gamma) and the critical refinement of user personas (Manager, Sales Head, Copywriter). Insights showed that competitors excelled at either speed (skip options) or content segmentation (early editing).

Initial Assumptions and The Critical Pivot (Adaptability)

Initial Assumption: My first concepts were influenced by a Designer-Centric Bias, assuming users prioritized speed and visual feedback.

Here's a conversation I had with the SPOC (single point of contact) at Presentations.ai:

Me: Before diving into the root cause, I'd like to clarify the metric. What constitutes the Onboarding Completion Rate in our flow?

Stakeholder: It is the ratio of users who create a project (our activation event) to the total number of users who sign up.

Me: Thank you. So, the 10% drop is primarily driven by a decrease in the number of projects created. This suggests friction in the steps immediately following sign-up. When did this drop begin, and was it a gradual or abrupt change?

Stakeholder: It's been a month, and it was a gradual drop that has stabilized at a 10% loss.

Me: Understood. After ruling out external factors and data integrity, this strongly points to a universal internal change. I recall we were focused on optimizing the speed of the flow. Have we made any recent changes to the steps after sign-up to make the flow faster or shorter?

Stakeholder: Actually, no. Exactly one month ago, we inserted a new screen immediately after the initial Prompt Entry screen to gather more specific context for the AI, such as "Who are you presenting to?" and "What tone would work best?".

Me: That is a critical change. Have we checked the drop-off rate on that new screen?

Stakeholder: Yes, we observed a significant increase in the drop-off specifically on that new contextual gathering screen.

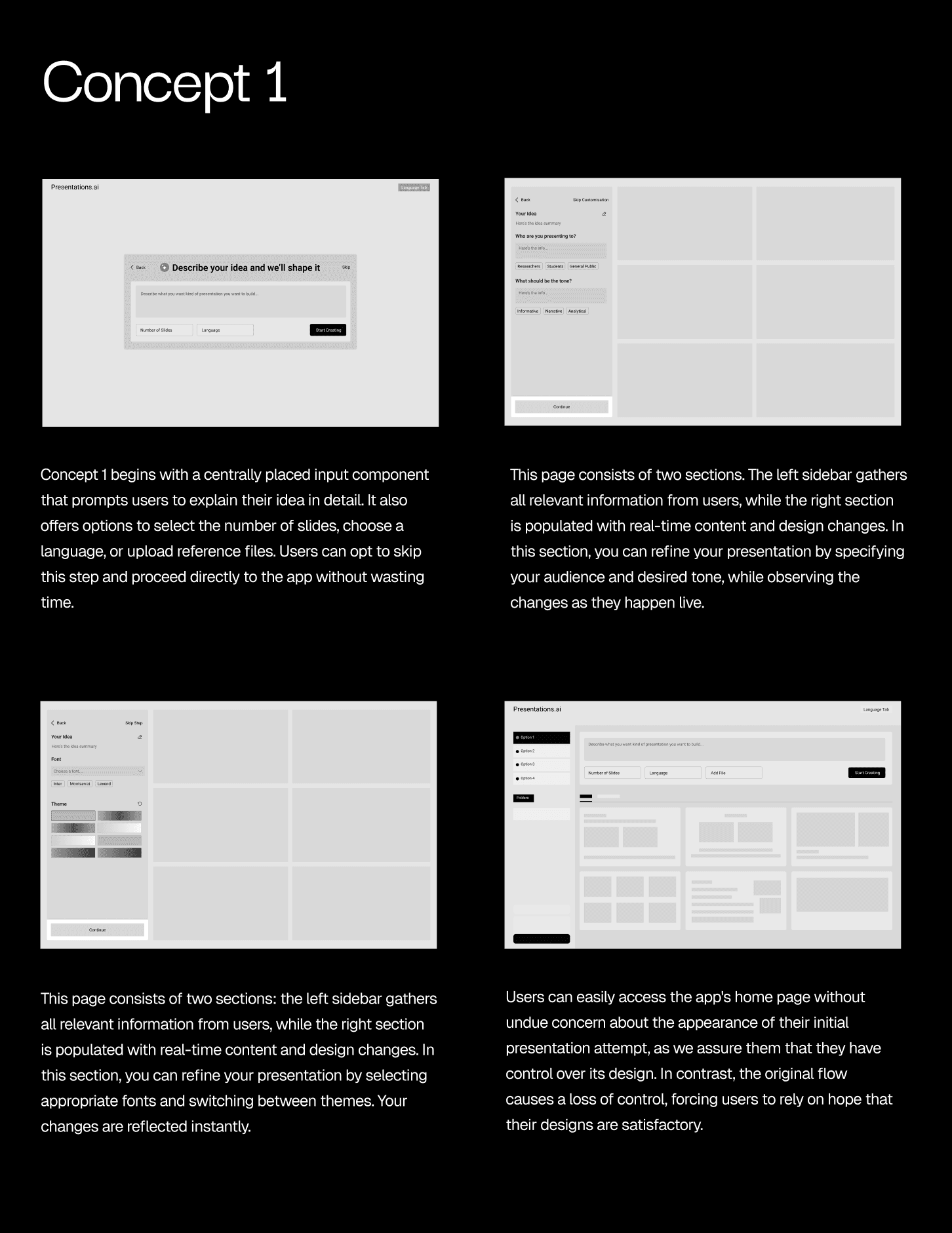

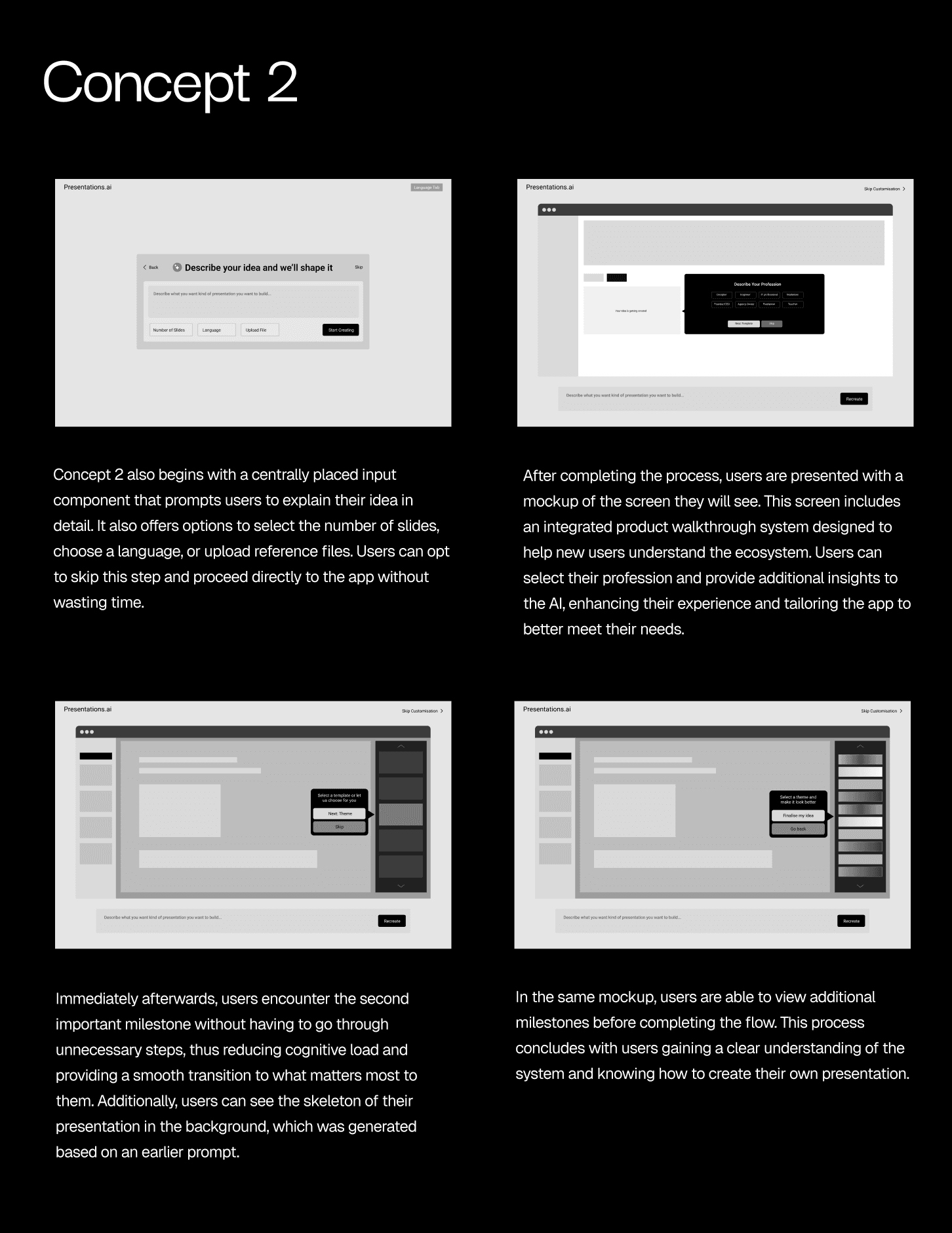

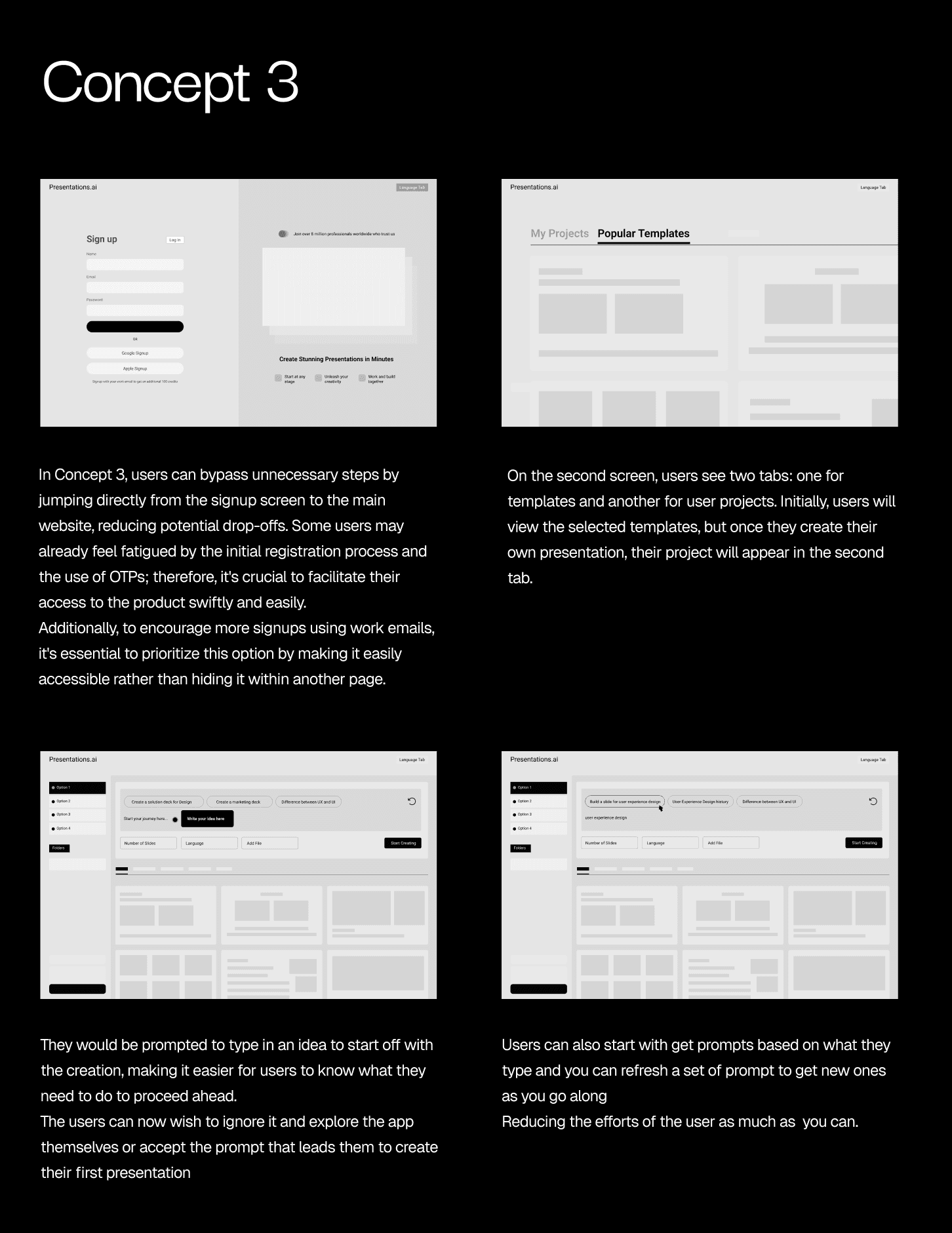

I then came up with three concepts:

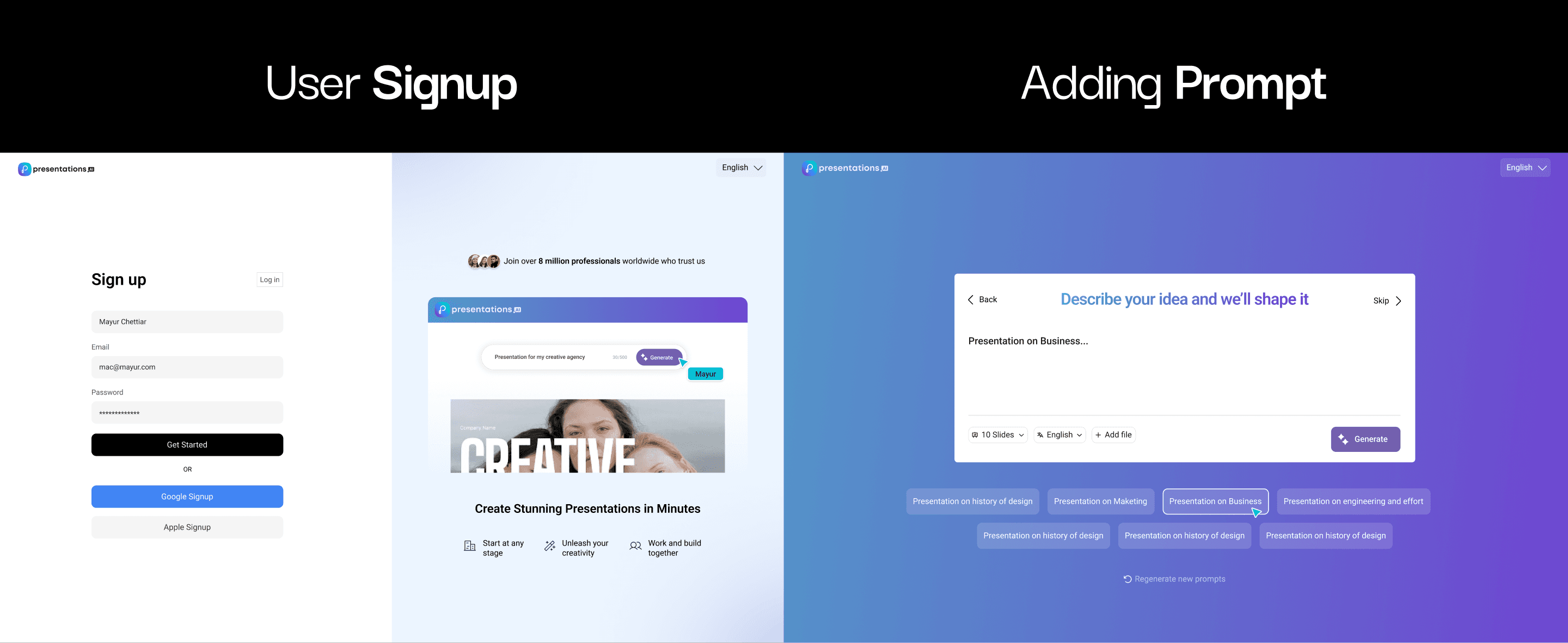

Me: These are the three solutions I have come up with, and you can also see one final flow in high fidelity.

Interviewer: Mayur, to be honest, I don't think this approach will work. While those specific screens weren't highly beneficial, removing this many screens will prevent us from gathering enough information for a quality result. Also, I believe this proposed flow is designed primarily with designers in mind. While they are one of our customer segments, they are not our only customers, and this flow would not be very helpful for non-designers.

Me: Understood. The objective is to enhance the AI output, which is achieved by gathering high-value, non-generic data to feed the LLM. Did the data gathered from that screen consistently lead to a measurable improvement in the final presentation's quality?

Interviewer: Interestingly, while the intent was good, our A/B tests showed the data collected from the initial version of those questions (Role/Position, Audience/Tone) was too vague and did not proportionally increase the quality of the final generated presentation.

Me: I think improving the quality of the questions or those screens instead of removing them entirely would make the flow better. I'd like to propose a solution based on that.

Interviewer: Please go ahead.

The Pivot: Feedback proved my initial assumption flawed; the core need for the AI product was Content Validation. This called for a strategic pivot (Adaptability) from a Design-First to a Content-First approach. The flow had to be streamlined without stripping out the data collection the AI depends on, so that it would not repeat the failure of the original flow.

Step 5: Explaining the Solution Evolution

My initial concepts (Concept 1, 2, 3) introduced a "Skip Steps" option and streamlined the prompt entry, but they failed to secure the necessary content context, meaning they also resulted in low-quality output, just faster.

The Final Improved Solution (Post-Feedback Iteration)

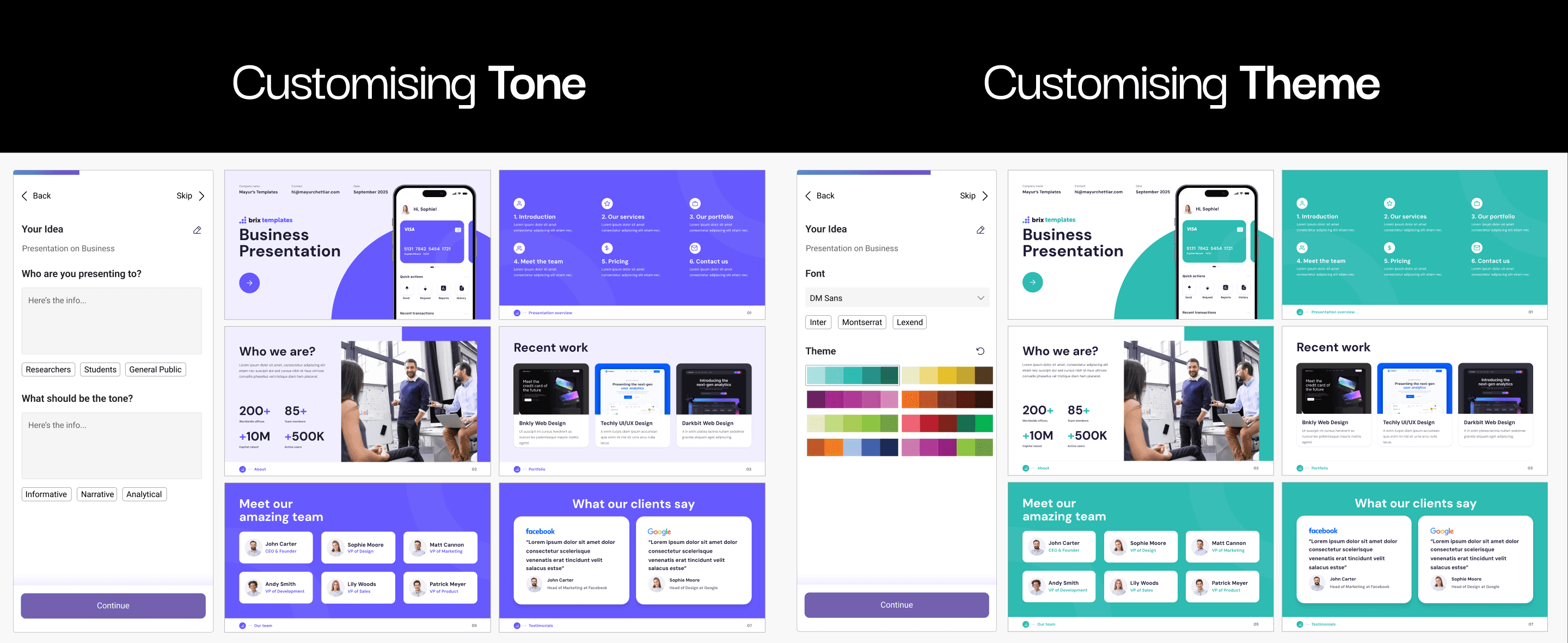

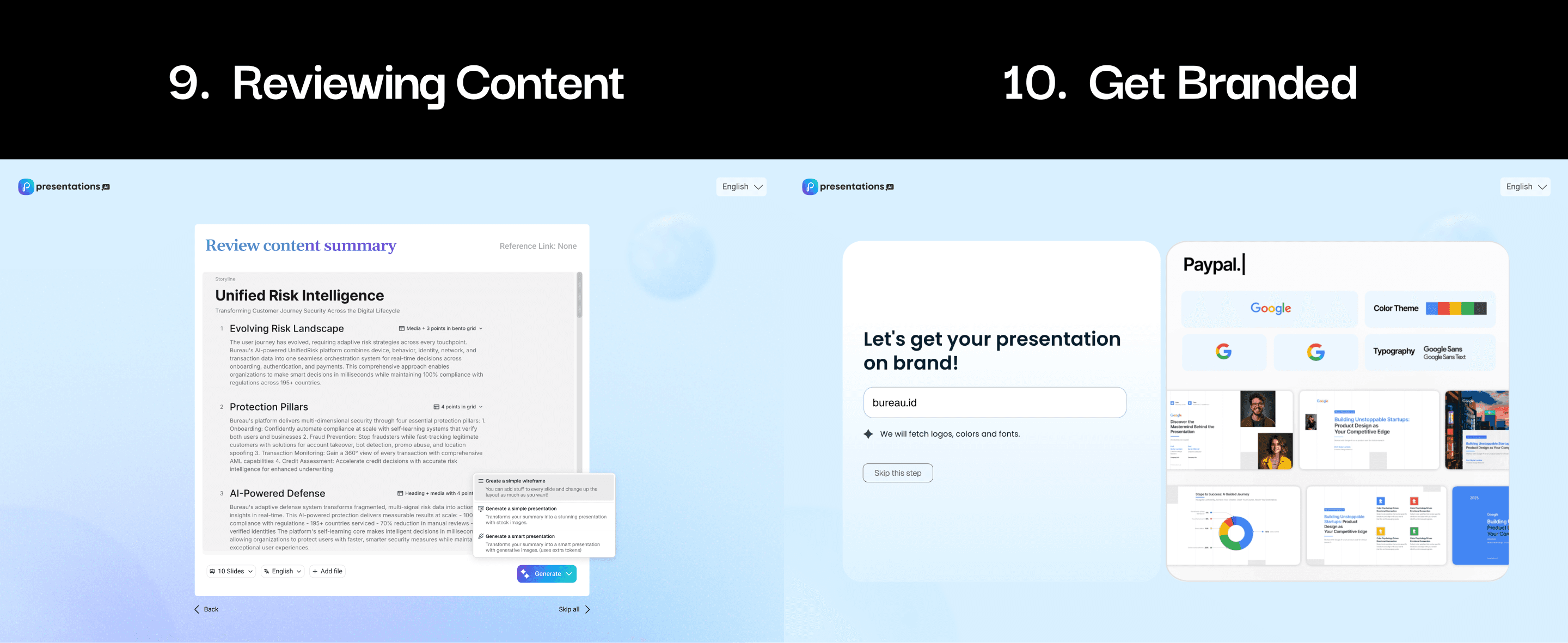

The final flow was built on intentionality and content validation:

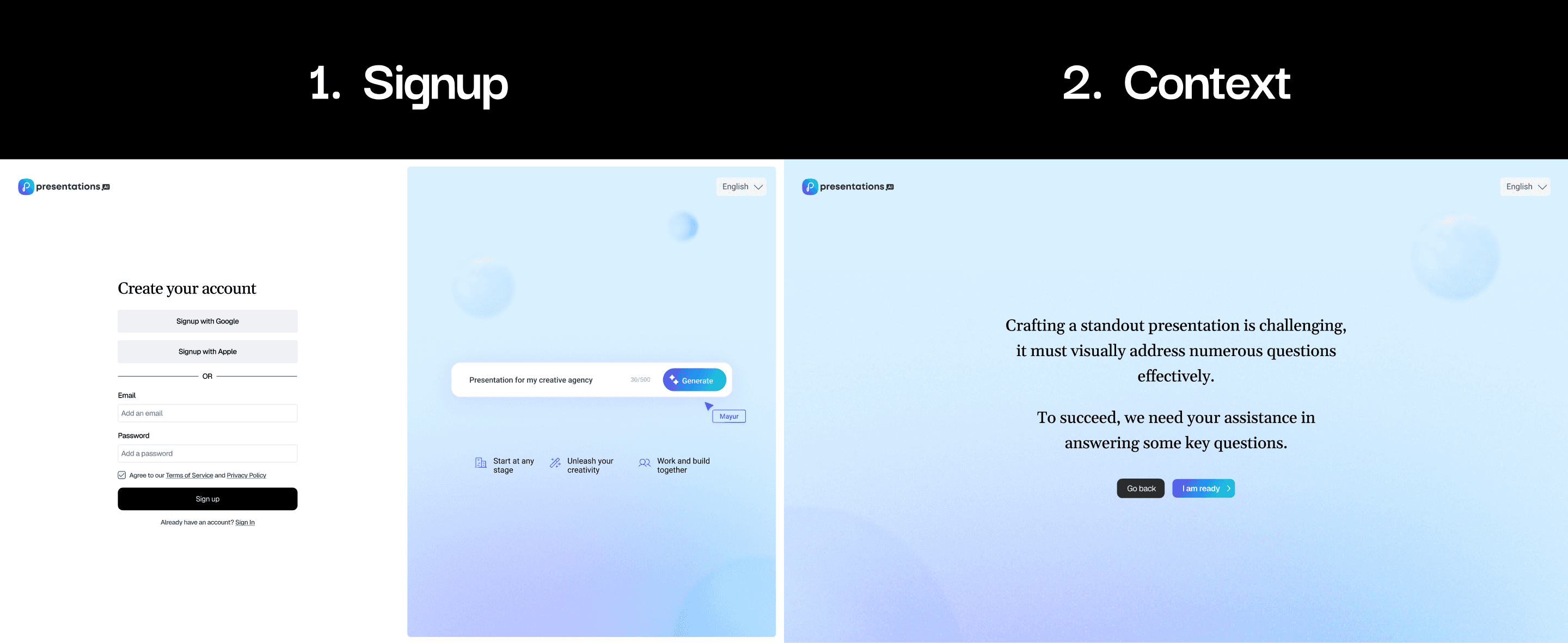

Legitimizing the Steps: Introduced a screen that explicitly explained why the questions were needed ("To succeed, we need your assistance..."). This demonstrated strong Communication Skills by proactively managing user expectations.

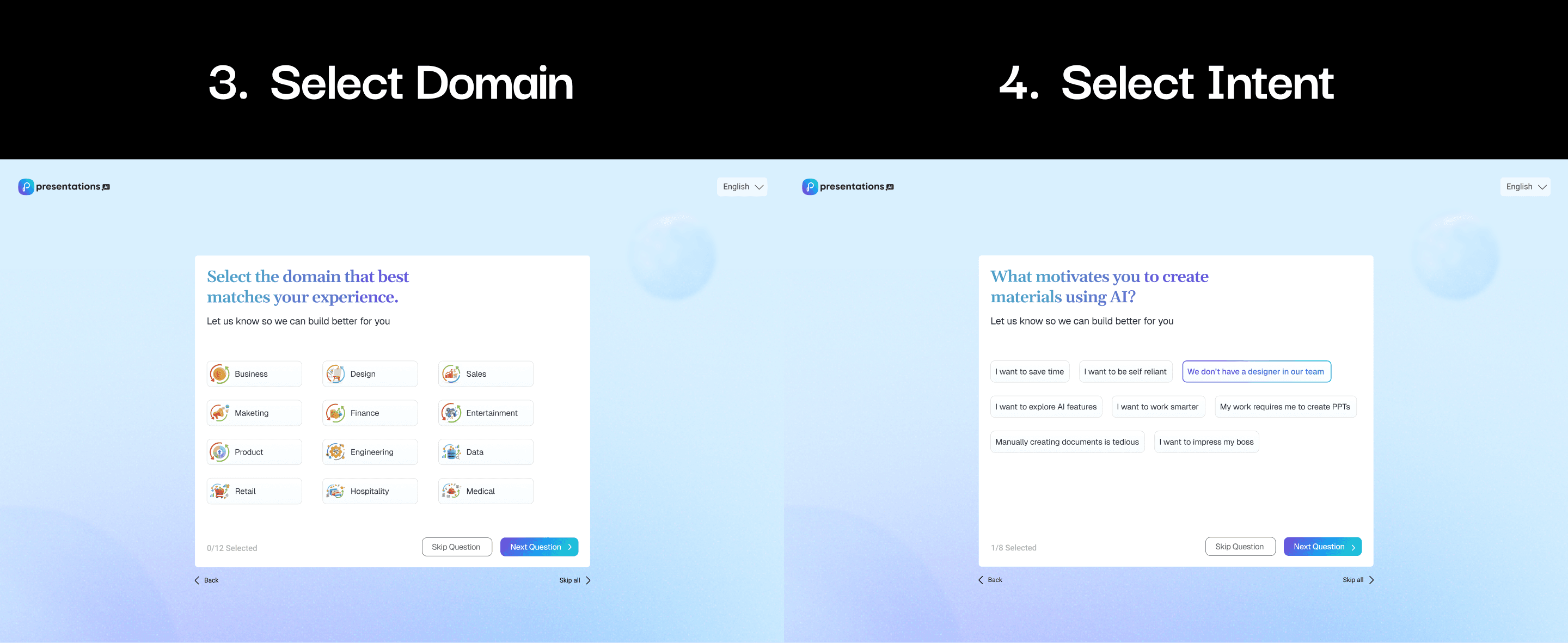

Intent Questions (Motivation/Domain): Replaced the low-value "Role/Position" flow with Domain and Motivation questions. This demonstrated Problem-Solving Skills by collecting data more valuable to an LLM (e.g., "I want to impress my boss").

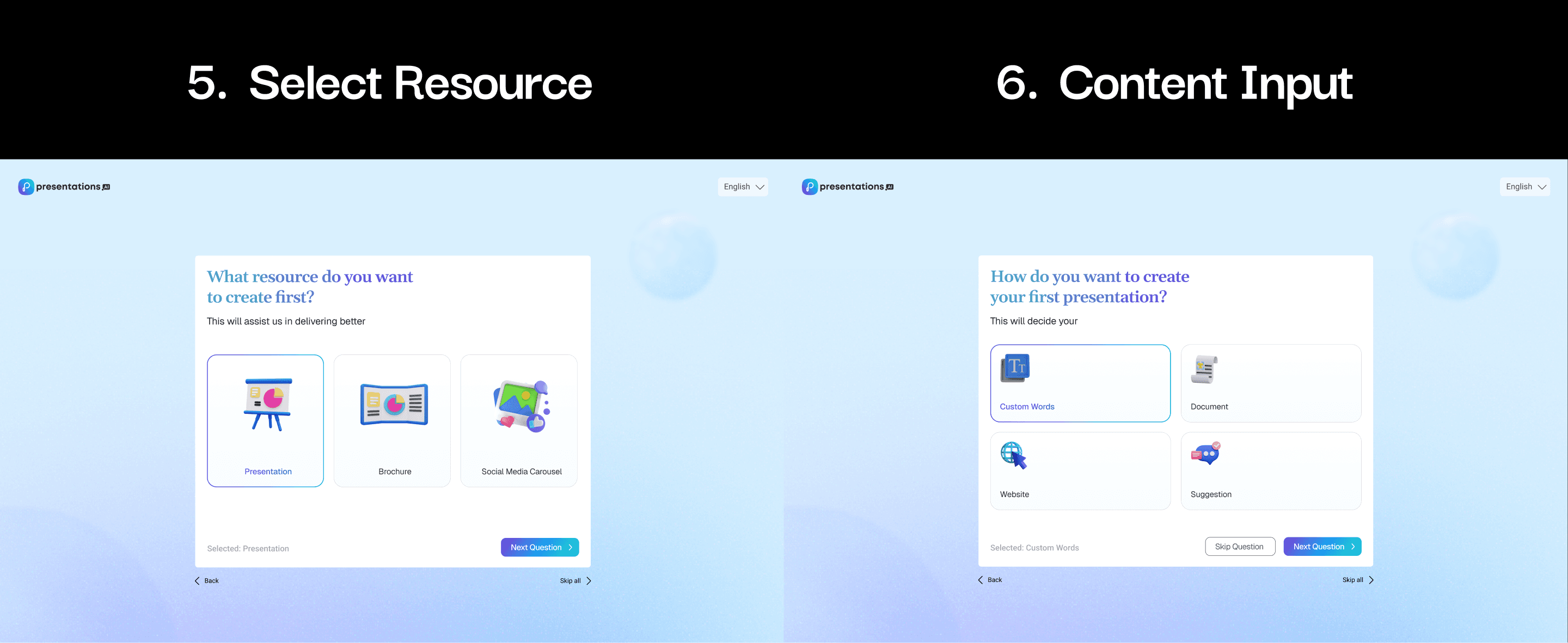

Creation Method: Offered clear options like Custom Words, Document, or Website, catering to users who already had content.

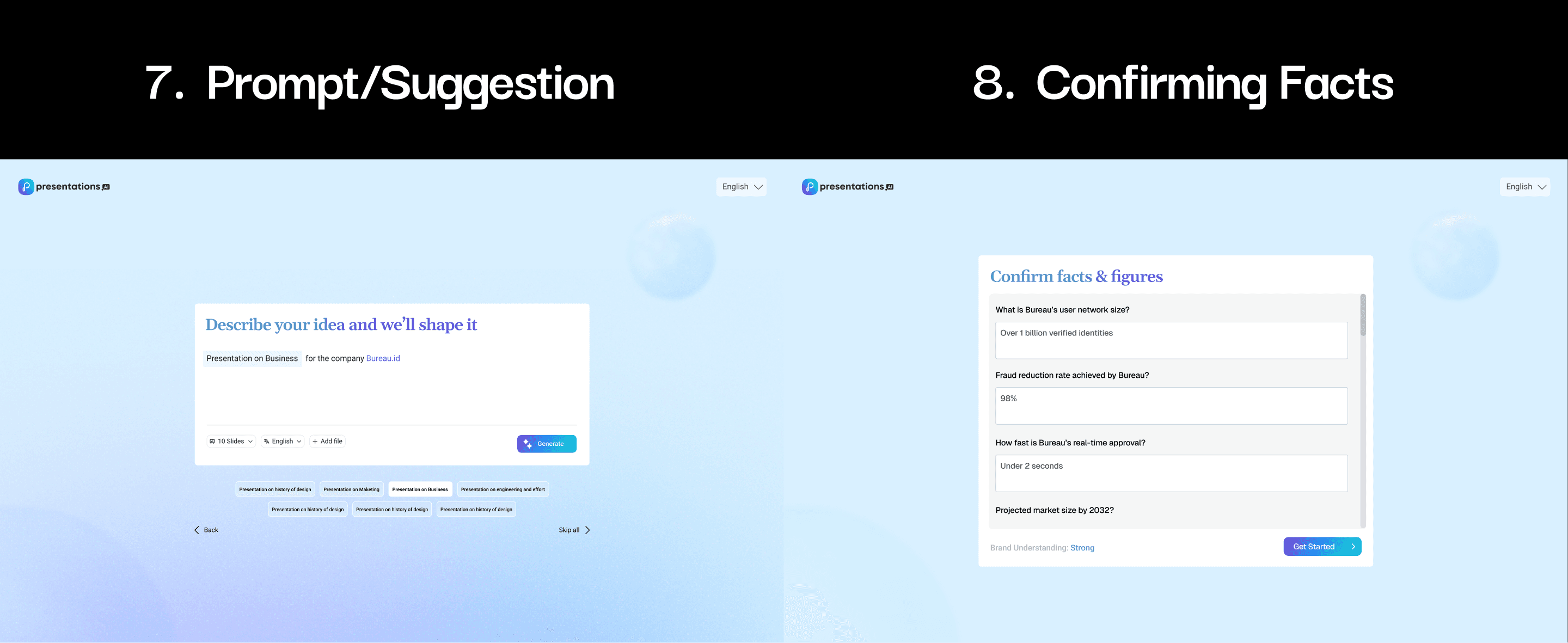

Confirm Facts & Figures (The Core Fix): This critical new step actively provided visual feedback and ensured content quality. The AI pre-fills facts (e.g., "Over 1 billion verified identities"), and the user confirms or edits them, validating the input and providing an immediate "Brand Understanding: Strong" confidence score.

Step 6: Conclusion

Lessons Learned and Reflection

The primary Lesson Learned (Learning Ability) is that in AI UX, Input is King. My initial mistake was assuming users valued design control over content quality. The final solution reflects the strategic realization that a slightly longer, highly contextual flow delivers more value than a short, generic one. In hindsight (Reflection), I would have integrated the content validation step earlier to gather input before the full prompt is written.

Areas for Future Improvements

Implement a persistent, minimalist Progress Indicator throughout the guided questioning process to further address user anxiety.

A/B test the quantity of Motivation and Domain questions to find the optimal balance between data richness and user speed.
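One possible way to evaluate such an A/B test is a two-proportion z-test on completion counts, sketched below. This is an illustrative assumption about the analysis and not the method used on the project. The function name and its parameters are hypothetical, and any counts passed to it would come from real experiment data.

```python
import math


def two_proportion_z_test(completed_a: int, total_a: int,
                          completed_b: int, total_b: int) -> tuple[float, float]:
    """Compare completion rates of variants A and B.

    Returns (z, p_value), where p_value is two-sided and uses the
    normal approximation with a pooled standard error.
    """
    if total_a <= 0 or total_b <= 0:
        raise ValueError("both variants need at least one user")

    rate_a = completed_a / total_a
    rate_b = completed_b / total_b

    # Pooled completion rate under the null hypothesis of no difference.
    pooled = (completed_a + completed_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        # Every user completed, or none did: no measurable difference.
        return 0.0, 1.0

    z = (rate_b - rate_a) / std_err
    # Two-sided p-value for a standard normal: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

A positive `z` means variant B completed onboarding at a higher rate than variant A; a small `p_value` suggests the difference is unlikely to be noise, provided the sample is large enough for the normal approximation to hold.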